Maintaining human priorities in the face of new technologies always feels like “a rearguard action.” You struggle to prevent something bad from happening even when it seems like it may be too late.

The promise of the next tool or system intoxicates us. Smart phones, social networks, gene splicing. It’s the super-computer at our fingertips, the comfort of a boundless circle of friends, the ability to process massive amounts of data quickly or to shortcut labor-intensive tasks, the opportunity to correct genetic mutations and cure disease. We’ve already accepted these promises before we pause to consider their costs, so it always feels like we’re catching up and may not have done so in time.

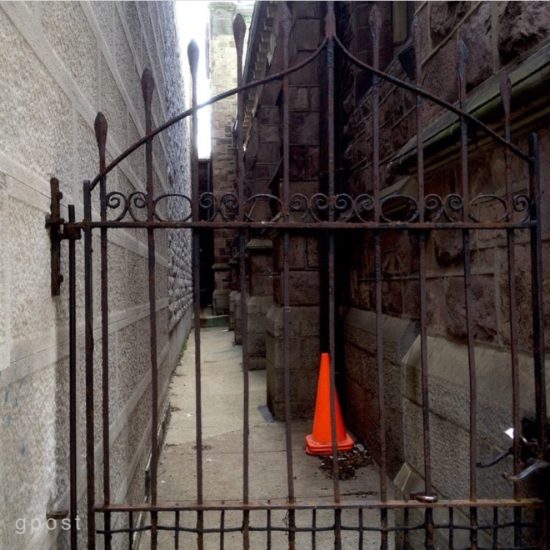

When you’re dazzled by possibility and the sun is in your eyes, who’s thinking “maybe I should build a fence?”

The future that’s been promised by tech giants like Facebook is not “the win-win” that we thought it was. Their primary objectives are to serve their financial interests (those of their founder-owners and other shareholders) by offering efficiency benefits like convenience and low cost to the rest of us. But as we’ve belatedly learned, they’ve taken no responsibility for the harms they’ve also caused along the way, including exploitation of our personal information, the proliferation of fake news and jeopardy to democratic processes, as I argued here last week.

Technologies that are not associated with particular companies also run with their own promise until someone gets around to checking them; artificial intelligence (AI) is one example. From an ethical perspective, we are usually playing catch-up with them too. If there’s a buck to be made or a world to transform, the discipline to ask “but should we?” always seems like getting in the way of progress.

Because our lives and work are increasingly impacted, the stories this week throw additional light on the technology juggernaut that threatens to overwhelm us and on our “rearguard” attempts to tame it with our human concerns.

For a fuller appreciation of the problem regarding Facebook, a two-part Frontline documentary airing this week is devoted to what one reviewer calls “the amorality” of the company’s relentless focus on adding users and compounding ad revenues while claiming to create the on-line “community” that all of us should want in the future. (The show airs tomorrow, October 29 at 9 p.m. and on Tuesday, October 30 at 10 p.m. EST on PBS.)

Frontline’s reporting covers Facebook’s role in whipping Myanmar’s Buddhists into a frenzy over its Rohingya minority, Russian interference in past and current election cycles, and how strongmen like Rodrigo Duterte in the Philippines have been manipulating the site to achieve their political objectives. Facebook CEO Mark Zuckerberg’s limitations as a leader are explored from a number of directions, but none as compelling as his off-screen impact on the five Facebook executives who were “given” to James Jacoby (the documentary’s director, writer and producer) to answer his questions. For the reviewer:

That they come off like deer in Mr. Jacoby’s headlights is revealing in itself. Their answers are mealy-mouthed at best, and the defensive posture they assume, and their evident fear, indicates a company unable to cope with, or confront, the corruption that has accompanied its absolute power in the social media marketplace.

You can judge for yourself. You can also ponder whether this is like holding a gun manufacturer liable when one of its guns is used to kill somebody. I’ll be watching “The Facebook Dilemma” for what it has to say about a technology whose benefits have obscured its harms in the public mind for longer than they probably should have. But then I remember that Facebook barely existed ten years ago. The most important lesson from these Frontline episodes may be how quickly we need to get the stars out of our eyes after meeting these powerful new technologies if we are to have any hope of avoiding their most significant fallout.

I was also struck this week by Apple CEO Tim Cook’s explosive testimony at a privacy conference organized by the European Union.

Not only was Cook bolstering his own company’s reputation for protecting Apple users’ personal information, he was also taking aim at competitors like Google and Facebook for implementing a far more harmful business plan, namely, selling user information to advertisers, reaping billions in ad dollar revenues in exchange, and claiming the bargain is providing their search engine or social network to users for “free.” This is some of what Cook had to say to European regulators this week:

Our own information—from the everyday to the deeply personal—is being weaponized against us with military efficiency. Today, that trade has exploded into a data-industrial complex.

These scraps of data, each one harmless enough on its own, are carefully assembled, synthesized, traded, and sold. This is surveillance. And these stockpiles of personal data serve only to enrich the companies that collect them. This should make us very uncomfortable.

Technology is and must always be rooted in the faith people have in it. We also recognize not everyone sees it that way—in a way, the desire to put profits over privacy is nothing new.

“Weaponized” technology delivered with “military efficiency.” “A data-industrial complex.” One of the benefits of competition is that rivals call you out, while directing unwanted attention away from themselves. One of my problems with tech giant Amazon, for example, is that it lacks a neck-and-neck rival to police its business practices. Cook’s (and Apple’s) motives here certainly have more than a dollop of competitive self-interest where Google and Facebook are concerned. On the other hand, Apple is properly credited with limiting the data it makes available to third parties and rendering the data it does provide anonymous. There is a bit more to the story, however.

If data privacy were as paramount to Apple as it sounded this week, it would be hard to reconcile that stance with Apple’s receiving more than $5 billion a year from Google to make it the default search engine on all Apple devices. However complicit in today’s tech bargains, Apple pushed its rivals pretty hard this week to modify their business models and become less cynical about their use of our personal data as the focus on regulatory oversight moves from Europe to the U.S.

Technologies that aren’t proprietary to a particular company but are instead used across industries require getting over additional hurdles to ensure that they are meeting human needs and avoiding technology-specific harms for users and the rest of us. This week, I was reading up on a positive development regarding artificial intelligence (AI) that only came about because serious concerns were raised about the transparency of AI’s inner workings.

AI’s ability to solve problems (from processing big data sets to automating steps in a manufacturing process or tailoring a social program for a particular market) is only as good as the algorithms it uses. Given concern about personal identity markers such as race, gender and sexual preference, you may already know that an early criticism of artificial intelligence was that an author of an algorithm could be unwittingly building her own biases into it, leading to discriminatory and other anti-social results. As a result, various countermeasures are being undertaken to minimize embedding these kinds of biases in AI code. With that in mind, I read a story this week about another systemic issue with AI: its “explainability.”

It’s the so-called “black box” problem. If users of systems that depend on AI don’t know how they work, they won’t trust them. Unfortunately, one of the prime advantages of AI is that it solves problems that are not easily understood by users, which presents the quandary that AI-based systems might need to be “dumbed-down” so that the humans using them can understand and then trust them. Of course, no one is happy with that result.

A recent article in Forbes describes the trust problem that users of machine-learning systems experience (“interacting with something we don’t understand can cause anxiety and make us feel like we’re losing control”) along with some of the experts who have been feeling that anxiety (cancer specialists who agreed with a “Watson for Oncology” system when it confirmed their judgments but thought it was wrong when it failed to do so because they couldn’t understand how it worked).

In a positive development, a U.S. Department of Defense agency called DARPA (or Defense Advanced Research Projects Agency) is grappling with the explainability problem. Says David Gunning, a DARPA program manager:

New machine-learning systems will have the ability to explain their rationale, characterize their strengths and weaknesses, and convey an understanding of how they will behave in the future.

In other words, these systems will get better at explaining themselves to their users, thereby overcoming at least some of the trust issue.

DARPA is investing $2 billion in what it calls “third-wave AI systems…where machines understand the context and environment in which they operate, and over time build underlying explanatory models that allow them to characterize real world phenomena,” according to Gunning. At least with the future of warfare at stake, a problem like “trust” in the human interface appears to have stimulated a solution. At some point, all machine-learning systems will likely be explaining themselves to the humans who are trying to keep up with them.

Moving beyond AI, I’d argue that there is often as much “at stake” as successfully waging war when a specific technology is turned into a consumer product that we use in our workplaces and homes.

While there is heightened awareness today about the problems that Facebook poses, few were raising these concerns even a year ago despite their toxic effects. With other consumer-oriented technologies, there is a range of potential harms where little public dissent is being voiced despite serious warnings from within and around the tech industry. For example:

– how much is our time spent on social networks—in particular, how these networks reinforce or discourage certain of our behaviors—literally changing who we are?

– since our kids may be spending more time with their smart phones than with their peers or family members, how is their personal development impacted, and what can we do to put this rabbit even partially back in the hat now that smart phone use seems to be a part of every child’s rite of passage into adulthood?

– will privacy and surveillance concerns become more prevalent when we’re even more surrounded than we are now by “the internet of things” and as our cars continue to morph into monitoring devices—or will there be more of an outcry for reasonable safeguards beforehand?

– what are employers learning about us from our use of technology (theirs as well as ours) in the workplace and how are they using this information?

The technologies that we use demand that we understand their harms as well as their benefits. I’d argue that we need to become more proactive about voicing our concerns and using the tools at our disposal (including the political process) to insist that company profit and consumer convenience are not the only measures of a technology’s impact.

Since the invention of the printing press a half-millennium ago, it’s always been hard but necessary to catch up with technology and to try to tame its excesses as quickly as we can.

This post was adapted from my October 28, 2018 newsletter.